Arabian Business

Palestinian users were unfairly targeted by post moderation and technical issues on Facebook, WhatsApp and Instagram throughout the May 2021 conflict with Israel, Meta said on Wednesday.

In comments made to reporters, the tech company said that Arabic content had been hit by post restrictions, hashtag removals, and blocked resharing throughout the crisis, while Hebrew content remained relatively unaffected.

Violence overtook Israel and Palestine throughout most of May 2021, triggered by protests in East Jerusalem by Palestinians over the eviction of six Palestinian families. At least 256 Palestinians and 13 Israelis were killed. Following the crisis, Meta contracted Business for Social Responsibility (BSR), a non-profit organisation focused on assessing the social responsibilities of businesses, to conduct a due diligence exercise on the impact of the company’s processes and policies on the conflict.

Meta said that in the lead-up to the outbreak of violence on May 10, a “global technical glitch” occurred, preventing users from re-sharing posts, including in Israel and Palestine. Miranda Sissons, global human rights director for Meta, said that this was “not intentional or targeted but a global error that affected tens of millions of people.”

Shortly after post-resharing was blocked, the Al-Aqsa hashtag, which pertained to a mosque at the centre of the crisis, was also blocked by a content reviewer, Meta said. Sissons noted that the person who made the error is “also human”, and that the hashtag block was fixed once they were made aware of the issue.

There was under-enforcement of Hebrew content and over-enforcement of Arabic content throughout the crisis. Palestinian journalists reported that their WhatsApp accounts had been blocked, which again was explained as unintentional and rectified after Meta was notified.

Arabic content was also flagged for violations at a significantly higher rate than Hebrew content, which could be attributed to Meta’s policies around legal obligations relating to designated foreign terrorist organisations, the company said.

“We are a US company that has to comply with US law,” Meta said in its own report.

Users were also given false strikes, leading to significantly lower visibility and engagement, after posts were removed for violating content policies. The human rights consequences were severe. Various rights such as freedom of expression, freedom of association and more were curtailed, with journalists and activists especially impacted, the BSR report found.

BSR’s report focused on all of Meta’s products and their use throughout the Israel-Palestine crisis, including Facebook, Instagram and WhatsApp.

The report found that Meta’s actions in May 2021 appeared to have had an adverse effect on Palestinian users’ rights to freedom of expression, freedom of assembly, political participation, and non-discrimination, and, ultimately, on the ability of Palestinians to share information and insights about their experiences as they occurred.

This was reflected in conversations with those affected, many of whom shared with BSR their view that Meta appears to be “another powerful entity repressing their voice.”

The review stated that Meta’s role is not to arbitrate the conflict, but rather to generate and enforce policies that mitigate the risk of its platforms aggravating the conflict by silencing voices, reinforcing power asymmetries, or allowing the spread of content that incites violence.

BSR highlighted good practice on Meta’s part, but people still felt repressive impacts even though the errors were later fixed.

The report extensively documented other errors and issues that occurred throughout the period. After a detailed and complete review, BSR identified unintentional bias while also highlighting good practice. It gave Meta 21 recommendations to address its adverse human rights impact.

Of these recommendations, Meta is currently implementing 10, partly implementing four, and assessing the feasibility of six, and has chosen not to take action on one.

Sissons highlighted that good human rights is not a compliance exercise. “It helps us learn to improve our products and policies,” she said.

Latest News

-

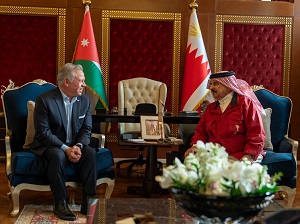

King meets with Bahrain monarch, discusses regional developments

King meets with Bahrain monarch, discusses regional developments

-

Southern Lebanon paramedics risk deadly Israeli strikes to do their work

Southern Lebanon paramedics risk deadly Israeli strikes to do their work

-

Israel says killed Iran's security chief Larijani

Israel says killed Iran's security chief Larijani

-

Missile debris kills one in Abu Dhabi as Iran presses Gulf attacks

Missile debris kills one in Abu Dhabi as Iran presses Gulf attacks

-

Drone, rocket attack targets US embassy in Baghdad

Drone, rocket attack targets US embassy in Baghdad